Camera vs LIDAR Navigation: What’s the Difference?

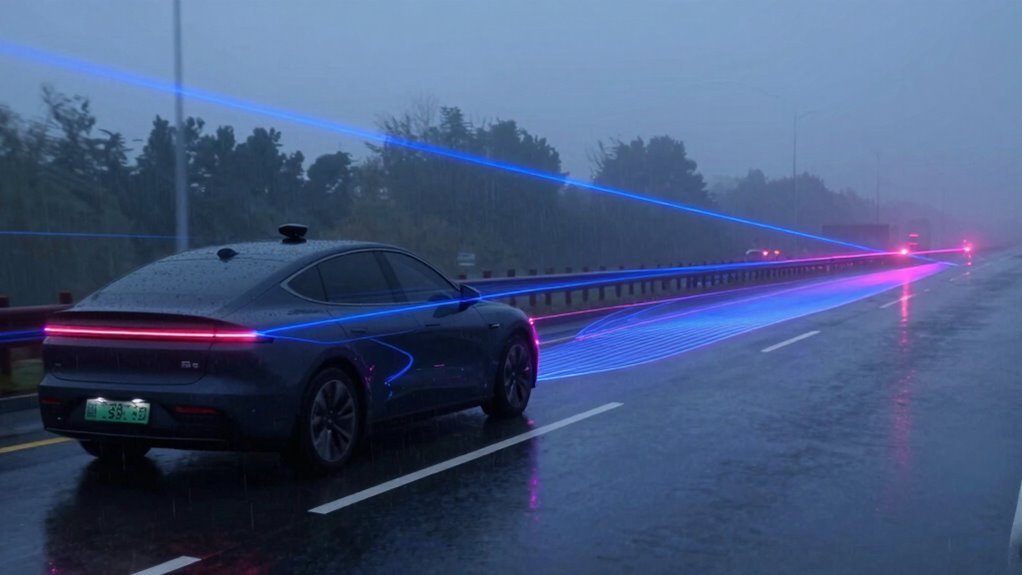

You’re zipping down the road, and while your car’s cameras snap pics like a selfie addict, LIDAR’s out there spinning like a tiny disco ball, mapping everything in 3D with crazy precision. This key difference in camera vs LIDAR navigation defines how self-driving systems perceive the world around them.

Cameras see colors and signs but fumble in rain or darkness, because they rely on visible light. In contrast, LIDAR laughs at night and drizzle, using laser pulses to nail distances within centimeters regardless of lighting.

It’s not really a battle, though. Think superhero team-up, where each power fills the other’s gaps. Together, camera and LIDAR systems create a more reliable, accurate view of the road, and trust us, the next level’s even cooler.

How Cameras Work in Self-Driving Cars

While your eyes blink, cameras on a self-driving car are already soaking in the world—24/7, no coffee needed.

You’ll find them perched on windshields, bumpers, and side mirrors, teaming up to watch every angle like a hawk with six heads.

These smart lenses see colors, signs, lanes, and cars just like you do—only they never daydream or get tired.

Using AI brainpower, they turn flat 2D images into smart guesses about depth and speed.
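How do you get depth out of a flat picture? Modern systems lean on neural networks, but one classic geometric shortcut is easy to sketch. Assuming a pinhole camera with a known focal length in pixels and an object of roughly known real-world height (the values below are made up for illustration), distance follows from similar triangles, and two distance estimates taken a moment apart give a rough closing speed. The function names are hypothetical.

```python
def estimate_distance_m(focal_px: float, real_height_m: float, pixel_height: float) -> float:
    """Pinhole-camera distance estimate: Z = f * H / h (similar triangles)."""
    if pixel_height <= 0:
        raise ValueError("pixel_height must be positive")
    return focal_px * real_height_m / pixel_height


def closing_speed_mps(prev_distance_m: float, curr_distance_m: float, dt_s: float) -> float:
    """Approach speed between two distance estimates; positive when the object is getting closer."""
    return (prev_distance_m - curr_distance_m) / dt_s


# Hypothetical numbers: a 1.5 m tall car seen by a camera with a 1000 px focal length.
d1 = estimate_distance_m(1000.0, 1.5, 50.0)  # 30.0 m
d2 = estimate_distance_m(1000.0, 1.5, 60.0)  # 25.0 m, half a second later
print(closing_speed_mps(d1, d2, 0.5))  # 10.0
```

The catch: the guess is only as good as the assumed object height, which is one reason camera depth is fuzzier than a direct measurement.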

Fancy chips crunch the data fast, mixing old-school image tricks with neural networks that learn like students acing a test.
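One of those old-school tricks is edge detection, which makes lane markings and object outlines pop out of an image. Below is a minimal pure-Python Sobel filter on a tiny grayscale image stored as a list of lists. It's a textbook illustration, not a claim about what any particular car's chip runs.

```python
import math

# Sobel kernels for horizontal (x) and vertical (y) intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(img: list[list[float]]) -> list[list[float]]:
    """Gradient magnitude for interior pixels; border pixels are left at 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = sum(SOBEL_X[j][i] * img[y + j - 1][x + i - 1] for j in range(3) for i in range(3))
            gy = sum(SOBEL_Y[j][i] * img[y + j - 1][x + i - 1] for j in range(3) for i in range(3))
            out[y][x] = math.hypot(gx, gy)
    return out


# A dark road with a bright vertical lane line starting at column 2.
image = [[0, 0, 255, 255]] * 3
print(sobel_magnitude(image)[1])  # [0.0, 1020.0, 1020.0, 0.0]
```

Flat regions score zero and the boundary lights up, which is exactly the kind of cue older lane detectors built on.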

Whether tracking a pedestrian or reading “Stop,” they’re always alert—no yawns, no excuses.

Some even watch *you* to make sure you’re paying attention.

Sure, they struggle in heavy rain or darkness, but with help from other sensors, they’ve got the road covered.

The camera system processes scenes fast enough that the car can brake on its own when it detects an obstacle.
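The “brake on its own” part usually boils down to a timing question: at the current closing speed, how many seconds until impact? A minimal time-to-collision check is sketched below. The 1.5 second threshold is an illustrative assumption, not a value from any real automatic emergency braking system.

```python
import math


def time_to_collision_s(distance_m: float, closing_speed_mps: float) -> float:
    """Seconds until impact; infinite if the gap is steady or growing."""
    if closing_speed_mps <= 0:
        return math.inf
    return distance_m / closing_speed_mps


def should_brake(distance_m: float, closing_speed_mps: float, ttc_threshold_s: float = 1.5) -> bool:
    """Trigger braking when time-to-collision drops below the (hypothetical) threshold."""
    return time_to_collision_s(distance_m, closing_speed_mps) < ttc_threshold_s


print(should_brake(20.0, 10.0))  # False: 2.0 s to impact
print(should_brake(10.0, 10.0))  # True: 1.0 s to impact
```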

Pretty cool for a bunch of tiny eyes!

How LIDAR Powers Autonomous Vehicle Vision

Ever wonder how a self-driving car “sees” the world in sharp, 3D detail, even in the dark?

It’s all thanks to LIDAR, your car’s secret night-vision superhero. Imagine for a moment that *you* are the LIDAR.

You zap out invisible laser pulses millions of times per second, measure how long each one takes to bounce back, and boom: a detailed 3D map appears.
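The math behind each pulse is refreshingly simple: light travels out and back, so the distance is half the round-trip time multiplied by the speed of light. A minimal sketch (the function names are just for illustration):

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0  # exact, by definition of the metre


def tof_distance_m(round_trip_s: float) -> float:
    """Distance to the target from a pulse's round-trip time: d = c * t / 2."""
    return SPEED_OF_LIGHT_MPS * round_trip_s / 2


def round_trip_s(distance_m: float) -> float:
    """Inverse: how long an echo from a target at distance_m takes to come back."""
    return 2 * distance_m / SPEED_OF_LIGHT_MPS


print(tof_distance_m(1e-6))  # about 149.9 m for a one-microsecond echo
```

It also shows why centimeter precision is hard-won: moving a target by 1 cm shifts its echo by only about 67 picoseconds.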

You build point clouds so precise, they sketch every curb, pedestrian, and rogue shopping cart in real time.
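Each laser return is really a range plus the direction the beam was pointing. Turning those into the x, y, z points of a point cloud is a spherical-to-Cartesian conversion. The sketch below assumes x points forward, y to the left, and z up, with angles in degrees; real sensors document their own conventions and add per-beam calibration.

```python
import math


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float) -> tuple[float, float, float]:
    """Convert one return (range, azimuth, elevation) to an (x, y, z) point in metres."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    return (horizontal * math.cos(az), horizontal * math.sin(az), range_m * math.sin(el))


# Hypothetical returns: (range m, azimuth deg, elevation deg).
returns = [(10.0, 0.0, 0.0), (10.0, 90.0, 0.0), (5.0, 180.0, -10.0)]
point_cloud = [to_cartesian(*r) for r in returns]
```

Repeat that for every return in a sweep and you get the point cloud.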

Rain or shine, day or night, you’re scanning 360 degrees—like having eyes on the back of your head (and the front, and sides).

You spot a cyclist two football fields away, track their speed, and guess their next move.
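Tracking speed and guessing the next move can start as simply as comparing positions between scans and extrapolating. Production trackers typically use Kalman filters or learned motion models; the constant-velocity sketch below only shows the core idea, and its numbers (a cyclist about 200 m ahead, scans 0.1 s apart) are invented for illustration.

```python
import math

Point = tuple[float, float]  # (x forward, y left) in metres


def estimate_velocity(prev: Point, curr: Point, dt_s: float) -> Point:
    """Velocity from two successive positions (finite difference)."""
    return ((curr[0] - prev[0]) / dt_s, (curr[1] - prev[1]) / dt_s)


def predict_position(pos: Point, vel: Point, horizon_s: float) -> Point:
    """Constant-velocity guess of where the object will be after horizon_s seconds."""
    return (pos[0] + vel[0] * horizon_s, pos[1] + vel[1] * horizon_s)


prev_scan, curr_scan = (200.0, 5.0), (199.0, 5.2)
vel = estimate_velocity(prev_scan, curr_scan, 0.1)
speed = math.hypot(*vel)  # roughly 10.2 m/s
future = predict_position(curr_scan, vel, 1.0)
```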

Even in rain, you hold up better than a camera, though heavy downpours and fog can still scatter your pulses.

You’re not just seeing; you’re *understanding*.

And at highway speeds, you keep the ride smooth and safe.

Sure, you’re a bit pricey, but you’re worth every penny when you’re basically giving cars superhuman 3D vision.

Who needs sunglasses when you’ve got lasers?

This precision comes from creating detailed 3D “point clouds” that capture shape and depth with remarkable accuracy.

Camera vs LIDAR: Accuracy, Range, and Environment

You’ve seen how LIDAR turns cars into 3D artists, painting the world point by point—even in pitch darkness. Now let’s break down how it stacks up against cameras when it comes to accuracy, range, and environment.

| Feature | LIDAR | Camera |
|---|---|---|
| Accuracy | Centimeter-level distance measurement | Depth is inferred, so less precise |
| Range | Up to 300 meters | Sees far, but depth estimates degrade with distance |
| Environment | Works day or night | Struggles in darkness or glare |
| Data type | 3D point clouds, highly detailed | 2D images, rich in color |

LIDAR nails exact distances and shapes no matter the light, while cameras see color and signs but have to infer depth. You’re getting rock-solid spatial awareness with LIDAR, or a visual storyteller with a camera. Pick your champion!
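Why does camera depth get fuzzier with range? For a stereo camera pair, a standard approximation says depth error grows with the square of distance: ΔZ ≈ Z² · Δd / (f · B), where f is the focal length in pixels, B the spacing between the two cameras, and Δd the disparity-matching error in pixels. The rig parameters below are hypothetical, chosen only to show the trend; a time-of-flight LIDAR's range error stays roughly constant across its working range instead.

```python
def stereo_depth_error_m(depth_m: float, focal_px: float, baseline_m: float,
                         disparity_error_px: float) -> float:
    """Approximate stereo depth uncertainty: dZ = Z**2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_error_px / (focal_px * baseline_m)


# Hypothetical rig: 1000 px focal length, cameras 0.3 m apart, 0.25 px matching error.
for depth in (10.0, 50.0, 100.0):
    err = stereo_depth_error_m(depth, 1000.0, 0.3, 0.25)
    print(f"{depth:5.0f} m -> about {err:.2f} m of depth uncertainty")
```

At 10 m that's roughly 8 cm of uncertainty; at 100 m it balloons to roughly 8 m.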

Can Cameras Handle Rain or Darkness?

So, what happens when the sky opens up and the roads turn into shiny, rain-slicked mirrors—can your car’s cameras still see where they’re going? Not really, and here’s why.

Rain streaks blur everything, droplets cloud the lens, and wipers swipe right through the frame—talk about bad timing!

Heavy rain can slash visibility by 80%, and at highway speeds, your camera might as well be squinting.

Darkness isn’t kind either—regular cameras struggle at night like you without your glasses.

Add rain at night, and the image flickers like a glitchy horror film.

Cold, foggy lenses or frost? Another layer of headache.

Even steering gets wobbly: camera-guided steering can veer more than 0.7 degrees off track.

Yeah, deraining tech helps, but in pouring rain or deep night, your camera’s basically guessing.
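One of the oldest deraining tricks for video is a temporal median filter: a rain streak flashes through a given pixel for only a frame or two, so taking the per-pixel median over several frames tends to erase it. This sketch assumes a static camera and scene, which a moving car doesn't have; real deraining for driving relies on motion compensation or learned models, so treat it purely as an illustration of the idea.

```python
from statistics import median

Frame = list[list[int]]  # grayscale pixel intensities, rows of columns


def temporal_median(frames: list[Frame]) -> Frame:
    """Per-pixel median across frames; transient bright streaks get voted out."""
    height, width = len(frames[0]), len(frames[0][0])
    return [[median(f[y][x] for f in frames) for x in range(width)] for y in range(height)]


clean = [[10, 10], [10, 10]]
streaked = [[10, 255], [10, 10]]  # a rain streak lights up one pixel
print(temporal_median([clean, streaked, clean]))  # [[10, 10], [10, 10]]
```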

Sorry, but Mother Nature doesn’t care about your smart car.

Where LIDAR Beats Cameras in Safety

How does your car “see” danger before you even notice it?

With LIDAR, it’s like giving your vehicle super vision.

You get precise distance tracking—down to the centimeter—so it spots obstacles fast, even at highway speeds.
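Spotting an obstacle in a point cloud can start with something as blunt as this: keep the points inside the car's path that stick up above the road, then take the closest one. The corridor half-width and height cutoff below are hypothetical, and the sketch assumes x forward, y left, and z measured up from the road surface. Real perception stacks also filter noise, cluster points into objects, and classify them.

```python
from typing import Optional

Point3D = tuple[float, float, float]  # (x forward, y left, z up from the road), metres


def nearest_obstacle_m(points: list[Point3D], half_width_m: float = 1.0,
                       min_height_m: float = 0.2) -> Optional[float]:
    """Forward distance to the closest point in the driving corridor, or None if clear."""
    in_path = [x for (x, y, z) in points
               if x > 0 and abs(y) <= half_width_m and z >= min_height_m]
    return min(in_path, default=None)


cloud = [(30.0, 0.5, 0.5), (12.0, 3.0, 1.0), (25.0, -0.2, 0.1), (18.0, 0.0, 1.2)]
print(nearest_obstacle_m(cloud))  # 18.0 (the 12 m point is off to the side, the 25 m one is road)
```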

While cameras can struggle in the dark, LIDAR owns the night. It brings its own light, so it works just as well in total darkness and keeps going in bright sun.

No more worrying about shadows or glare!

It builds a 3D map of everything around you, so it knows a pedestrian isn’t just a blur but a real person stepping too close.

At high speeds, that extra range gives your car more time to react—like having extra eyes that never blink.
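Here's the back-of-the-envelope version of why range buys reaction time. Stopping distance is the ground covered while the system reacts plus the braking distance, v·t + v²/(2a). The speed, latency, and deceleration values below are illustrative assumptions, not specs for any real vehicle.

```python
def stopping_distance_m(speed_mps: float, reaction_s: float, decel_mps2: float) -> float:
    """Distance covered during the reaction delay plus constant-deceleration braking."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2 * decel_mps2)


def can_stop_in_time(detection_range_m: float, speed_mps: float,
                     reaction_s: float = 0.5, decel_mps2: float = 6.0) -> bool:
    """True if an obstacle first seen at detection_range_m leaves room to stop."""
    return detection_range_m >= stopping_distance_m(speed_mps, reaction_s, decel_mps2)


# Hypothetical highway case: 30 m/s (108 km/h) -> 15 m reacting + 75 m braking = 90 m.
print(stopping_distance_m(30.0, 0.5, 6.0))  # 90.0
print(can_stop_in_time(200.0, 30.0))  # True
print(can_stop_in_time(80.0, 30.0))   # False
```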

And in crowded cities? LIDAR cuts through the chaos with laser precision.

Honestly, it’s like your car’s got a sixth sense—except it’s real, and it’s *really* cool.

Safety just got a serious upgrade.

The Future of Self-Driving Sensors: Fusion Wins

LIDAR might give your car superhero night vision, but even superheroes work better as a team.

You’re not just relying on one sensor—you’re fusing camera, radar, ultrasonic, and LIDAR data to see the world smarter.

Think of it like giving your car eyes, ears, and instincts all at once.

Early fusion blends raw data, like matching 3D points with 2D images, boosting depth accuracy by up to 30%.
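Matching 3D points with 2D images usually means projecting each LIDAR point into the camera picture: move it into the camera's coordinate frame with a rotation and translation (the extrinsics), then apply the pinhole model (the intrinsics). The calibration values below are hypothetical. The rotation simply converts a LIDAR frame (x forward, y left, z up) to a camera frame (x right, y down, z forward), with both sensors assumed to sit at the same spot.

```python
from typing import Optional

Matrix3 = list[list[float]]
Vec3 = tuple[float, float, float]

# Hypothetical extrinsics: camera x = -lidar y, camera y = -lidar z, camera z = lidar x.
R_LIDAR_TO_CAM: Matrix3 = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
T_LIDAR_TO_CAM: Vec3 = (0.0, 0.0, 0.0)


def project_point(p: Vec3, fx: float, fy: float, cx: float, cy: float,
                  rot: Matrix3 = R_LIDAR_TO_CAM,
                  trans: Vec3 = T_LIDAR_TO_CAM) -> Optional[tuple[float, float, float]]:
    """Return (u, v, depth) in pixels and metres, or None if the point is behind the camera."""
    # Camera-frame coordinates: rot @ p + trans.
    x, y, z = (sum(rot[r][c] * p[c] for c in range(3)) + trans[r] for r in range(3))
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy, z)


# A point 10 m ahead and 1 m to the right lands 100 px right of the image centre.
print(project_point((10.0, -1.0, 0.0), fx=1000.0, fy=1000.0, cx=640.0, cy=360.0))
```

Once a point lands on a pixel, early fusion can attach that point's measured depth to the pixel's color.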

Late fusion compares final outputs, like tracking a pedestrian’s speed and shape across sensors.

When one fails, others step in—no panic, just smooth recovery.
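Late fusion and graceful failover can be sketched together. Suppose each sensor reports its own estimate of, say, a pedestrian's distance, along with a variance saying how much to trust it. Inverse-variance weighting is one textbook way to combine them, and a sensor that drops out simply contributes nothing. This is a simplified stand-in for the trackers real stacks use, and the numbers are made up.

```python
from typing import Optional

Estimate = tuple[float, float]  # (value, variance)


def fuse_estimates(estimates: list[Optional[Estimate]]) -> Optional[Estimate]:
    """Inverse-variance weighted average; sensors reporting None are skipped."""
    valid = [e for e in estimates if e is not None]
    if not valid:
        return None  # every sensor failed: nothing to fuse
    weights = [1.0 / var for _, var in valid]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, valid)) / total
    return value, 1.0 / total


camera, lidar = (10.0, 1.0), (12.0, 1.0)  # equally trusted distance estimates, metres
print(fuse_estimates([camera, lidar]))  # (11.0, 0.5)
print(fuse_estimates([camera, None]))   # (10.0, 1.0): LIDAR dropped out, camera carries on
```

Notice that the fused variance is smaller than either input's, which is the whole point of fusing.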

New hardware, like Kyocera’s all-in-one camera-LIDAR sensor, kills parallax so the two views line up cleanly.
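Why does parallax matter? When the camera and LIDAR sit even a few centimeters apart, they view a nearby object from slightly different angles, so the same point lands on different pixels unless it's corrected for, and the correction depends on depth. For a pinhole camera the mismatch is roughly f · b / Z pixels, where b is the offset between the sensors and Z the object's distance. The 10 cm offset and 1000 px focal length below are hypothetical.

```python
def parallax_offset_px(focal_px: float, sensor_offset_m: float, depth_m: float) -> float:
    """Approximate pixel shift between two viewpoints separated by sensor_offset_m."""
    return focal_px * sensor_offset_m / depth_m


for depth in (2.0, 10.0, 50.0):
    print(f"{depth:4.0f} m -> {parallax_offset_px(1000.0, 0.1, depth):.0f} px")
```

That works out to about 50 px at 2 m but only 2 px at 50 m, so the mismatch is worst for close objects. A design that makes the two viewpoints coincide pushes b toward zero.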

Together, they create richer, safer 3D maps, beat low-light struggles, and edge closer to full autonomy.

Fusion isn’t just smart—it’s your car’s secret weapon.

The future isn’t camera vs. LIDAR.

It’s both, working in harmony.

Frequently Asked Questions

How Much Does LIDAR Cost Compared to Cameras?

You’re looking at a big price jump with LIDAR—cameras cost hundreds, while LIDAR often runs into thousands.

It’s like comparing a snack to a full meal!

Lasers and precision parts make LIDAR pricier, and don’t forget the heavy-duty computers it needs.

Cameras?

Cheap, easy to use, and get the job done.

You’ll save cash *and* hassle going with cameras for most jobs.

Are There Privacy Concerns With Camera-Based Systems?

Yeah, there are privacy concerns with camera-based systems—you’re right to wonder!

Hackers can sneak in if security’s weak, and nobody wants their commute turned into a viral video.

You’re usually fair game in public, but hidden cams in private spots? Big no-no.

Laws let traffic cams catch speeders, but they shouldn’t stalk you.

Good encryption and clear rules keep things safe, smart, and way less creepy.

Do Any Self-Driving Cars Use Only Cameras?

Yes, you’re right—some self-driving cars *do* run on cameras alone!

Tesla zooms down highways using just cameras, like a hawk spotting prey, while Wayve casually cruises UK streets, all eyes and no radar.

It’s like teaching a robot to drive using only what it sees, no fancy LIDAR crutches.

Humans do it, why not bots?

Sunny days? Perfect.

Heavy fog? Uh-oh—we see why others still pack extra sensors!

Is LIDAR Affected by Bright Sunlight?

Yeah, LIDAR can struggle in bright sunlight. Those sneaky sunbeams flood the sensor with background light and muddy the readings.

But don’t worry, engineers fight back with smart tricks like ultra-narrow filters and fancy shutters.

Some even use brainy signal processing to toss out the noise.
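Here's the flavor of that signal processing. Some LIDARs, especially those built on single-photon detectors, fire many pulses and build a histogram of when photons arrive. Sunlight photons show up at random times, so they spread into a roughly flat background, while real echoes pile up in the same time bin. Estimating the background and demanding a peak well above its statistical noise filters out much of the sun. The threshold factor and histogram values below are illustrative assumptions, not parameters from any real sensor.

```python
import math
from statistics import median
from typing import Optional


def find_echo_bin(histogram: list[int], k: float = 5.0) -> Optional[int]:
    """Index of the echo's time bin, or None if nothing rises above the sunlight background."""
    background = median(histogram)  # ambient light is spread across most bins
    noise = math.sqrt(background) if background > 0 else 1.0  # photon (shot) noise ~ sqrt(count)
    peak = max(range(len(histogram)), key=histogram.__getitem__)
    if histogram[peak] - background < k * noise:
        return None
    return peak


counts = [20] * 100  # hypothetical sunlight background: about 20 photons per bin
counts[42] = 80      # laser echoes stacking up in bin 42
print(find_echo_bin(counts))  # 42
```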

You’d be amazed how well it still works, sunny or not.

Think of it like sunglasses for robots: the sun’s blazing, but LIDAR’s still cool, calm, and laser-focused!

Can Camera Systems Recognize Hand Signals From Pedestrians?

Yes, camera systems can recognize hand signals from pedestrians, and they’re getting pretty smart at it!

Using clever tech like infrared and depth sensors, they spot gestures even in tricky lighting.

With accuracy hitting over 99% in some cases, they’re like eagle-eyed helpers watching for waves, stops, or thumbs-up.

Plus, they work fast, respond in real-time, and don’t get bored—unlike your friend who always misses the signal at the drive-thru!

Conclusion

You’re cruising down the road, sunlight glinting off rain-slicked asphalt, and cameras catch the colors, the signs, the world in high-def. But when night falls like a sneaky blanket, LIDAR’s laser whispers keep mapping every curve in 3D. One sees like you, the other senses like a bat with goggles. Joke’s on us: neither wins alone. Together? They’re the dream team, high-fiving down the highway to the future.
